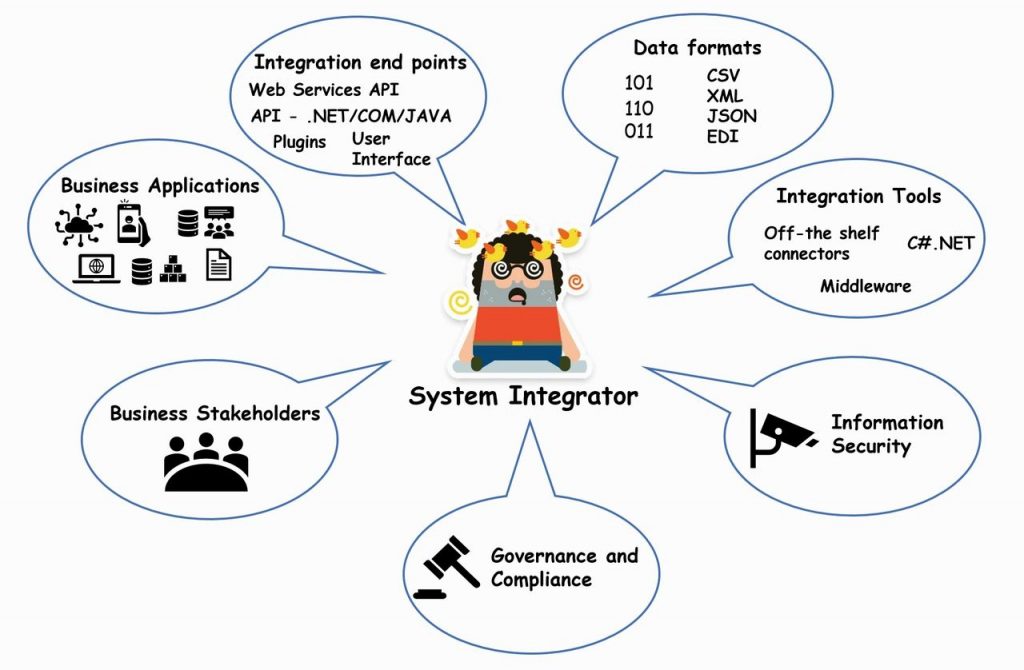

Effective System Integration for your Digital Transformation Project

A modern-day systems integrator working on an intelligent process automation project has a large number of tools and options available. It can be challenging to identify which system integration method to use and how to deploy it effectively. Added to this is an ever-increasing number of project stakeholders to please, from the business to information security and compliance. Here we look at some of the ways in which system integration can be achieved and their relative merits.

Nowadays, organisations typically use several enterprise-level business applications, a mix of legacy and modern applications running on-premises or in the cloud. Legacy applications may have been built bespoke or customised as the business has evolved. Enterprise-level business applications can be based on different technology stacks and owned and managed by different entities. Some may be subscription-based and managed by a third party; others may be perpetually licensed and run on on-premises infrastructure. Such characteristics can turn these business applications into information silos.

Applying automation to an end-to-end process may require interaction with one or more business applications to push/pull data in order to utilise business information. System integration enables business applications to share information and act as a coordinated whole, essentially singing from the same hymn sheet. This ensures that the benefits of these different information assets can be realised, delivers a pleasant user experience, and maximises ROI in terms of gained efficiencies, increased productivity, compliance, and cost savings.

Proper system integration ensures that business information flows seamlessly across these disparate systems so that business processes can use it, thereby reducing the risk of these systems becoming information silos.

System integrations should always be planned carefully; without planning, the resulting pain is felt later in the application lifecycle in the form of end-user frustration, lack of user confidence, data duplication and possible data integrity issues. An automation platform that integrates poorly with other systems can undo the efficiencies it was meant to deliver and could wipe out all of its expected benefits.

When it comes to system integration there is no simple answer! One must look at the business requirements, understand the systems to be integrated and the current application landscape, and then devise the approach that will work most effectively.

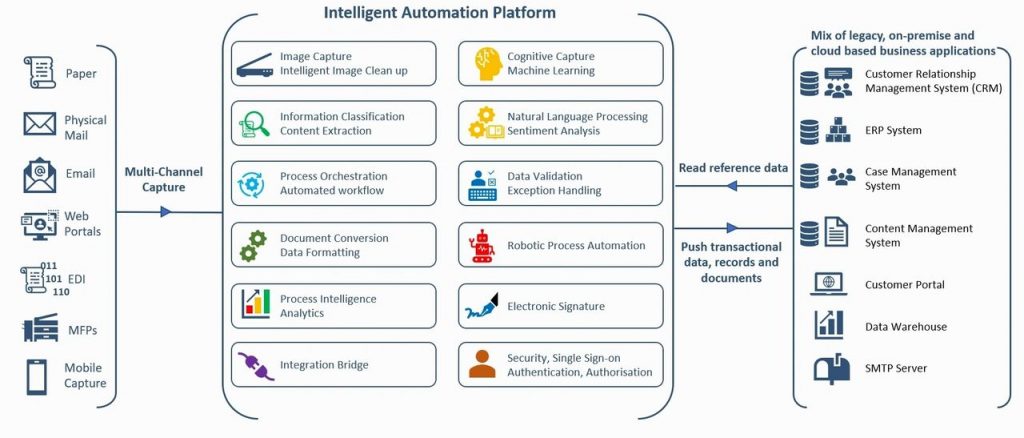

Consider the use case of Digital Mailroom automation. Inbound communications are received via various channels, such as paper, email, Web forms, EDI, and social media, and require capture, classification, data extraction, validation, and routing to the right line-of-business system. Customers could be sending order forms via email or post; these need to be tagged with the correct customer account, have their order information extracted, and be channelled to the Order Processing system. Invalid orders will require a notification back to the customer listing the issues identified.

The following diagram illustrates a number of applications residing in the ecosystem, all of which rely upon smooth information sharing for the automation solution to work.

The following aspects should be considered as part of planning and analysis prior to making a decision on the integration approach:

7 Key Considerations for your Intelligent Process Automation Integration Project

1. Integration mechanism

Technology advances and the widespread availability of open standards, APIs, middleware applications and RPA (Robotic Process Automation) are continuously opening up more avenues for integrating systems. The importance of proper analysis and due diligence in selecting the right integration approach should never be underestimated. The pros and cons of loosely and tightly coupled approaches should be weighed for the given integration use case and environment. Integration success criteria should be clearly defined, and detailed functional and non-functional requirements concisely documented. Any integration patterns and standards already followed in the organisation should be understood and taken into account.

a) File Exchange

Exchanging data files is one of the simplest methods of transferring data between systems. Data can be exchanged as CSV, JSON, XML or proprietary formats. This approach works best for one-way, read-only sharing of data that does not need frequent updates. However, transferring data in a flat file is analogous to throwing it over the fence: the source system receives no acknowledgement of whether the target system ingested it successfully. This makes file exchange unreliable, and it is not recommended for critical information.
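As a minimal Python sketch of a file drop (the folder layout and the order fields are assumptions for illustration), the exporter below writes the CSV under a temporary name and then renames it into place, so a target system polling the folder never reads a half-written file. Note what it cannot do: when the function returns, the source still has no idea whether the target ingested the file.

```python
import csv
import os
import tempfile
from pathlib import Path

# Hypothetical column layout agreed with the target system.
FIELDNAMES = ["order_id", "customer_id", "amount"]


def export_orders_csv(orders: list[dict], target: Path) -> None:
    """Write order records to a CSV file in a shared drop folder.

    The file is written under a temporary name in the same folder and then
    renamed, so a consumer polling the folder never sees a partial file.
    """
    fd, tmp_name = tempfile.mkstemp(dir=target.parent, suffix=".tmp")
    try:
        with os.fdopen(fd, "w", newline="", encoding="utf-8") as fh:
            writer = csv.DictWriter(fh, fieldnames=FIELDNAMES)
            writer.writeheader()
            writer.writerows(orders)
        # On POSIX this rename is atomic when both paths are on the same filesystem.
        os.replace(tmp_name, target)
    except BaseException:
        os.unlink(tmp_name)  # clean up the partial temp file
        raise
    # Returning only means the file exists; the target system has not
    # acknowledged anything.
```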

b) Database

Data can be written to a staging table which acts like a queue and is read by the target system, with records marked once processed successfully. The source application should periodically check for any failed transactions and take corrective actions. This approach is simple and reliable for use cases which do not require real-time feedback.
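A minimal sketch of the staging-table pattern, using Python's built-in `sqlite3` purely so it runs standalone. The table name `order_staging`, its columns and the status values are hypothetical, and in practice the source and target would each use their own connection to a shared database rather than one connection as here.

```python
import sqlite3

# Hypothetical staging table acting as a queue between source and target.
SCHEMA = """
CREATE TABLE IF NOT EXISTS order_staging (
    id INTEGER PRIMARY KEY AUTOINCREMENT,
    payload TEXT NOT NULL,
    status TEXT NOT NULL DEFAULT 'PENDING',  -- PENDING / PROCESSED / FAILED
    error TEXT
)
"""


def enqueue(conn: sqlite3.Connection, payload: str) -> None:
    """Source side: write a record into the staging table."""
    with conn:  # commits on success, rolls back on error
        conn.execute("INSERT INTO order_staging (payload) VALUES (?)", (payload,))


def process_pending(conn: sqlite3.Connection, handler) -> None:
    """Target side: process pending rows and mark each one with its outcome."""
    rows = conn.execute(
        "SELECT id, payload FROM order_staging WHERE status = 'PENDING' ORDER BY id"
    ).fetchall()
    for row_id, payload in rows:
        try:
            handler(payload)
        except Exception as exc:
            with conn:
                conn.execute(
                    "UPDATE order_staging SET status = 'FAILED', error = ? WHERE id = ?",
                    (str(exc), row_id),
                )
        else:
            with conn:
                conn.execute(
                    "UPDATE order_staging SET status = 'PROCESSED' WHERE id = ?",
                    (row_id,),
                )


def failed_records(conn: sqlite3.Connection) -> list:
    """Source side: periodic check for failures that need corrective action."""
    return conn.execute(
        "SELECT id, payload, error FROM order_staging WHERE status = 'FAILED'"
    ).fetchall()
```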

Data can also be read from or written to the target application's database tables directly, but this method should be adopted only if the application permits it. Writing directly to application tables bypasses the application's own validation logic, so it can inadvertently corrupt data unless the data is validated beforehand. Additionally, the integration can break if an application upgrade changes the database schema.

c) API Integration

Most applications expose a native Application Programming Interface (API) in the form of .NET assemblies, a Java API or traditional COM interfaces. Using a native API creates a tightly coupled integration, and its pros and cons should be considered. Tightly coupled integrations using COM may require code to be recompiled or reconfigured if COM interface compatibility breaks as a result of a system upgrade. Fortunately, COM interfaces are becoming obsolete, so no more nightmares of ‘Runtime error 429 – ActiveX component can’t create object’.

.NET APIs are typically functionally rich and allow developers to build tight, robust integrations. Several codeless platforms and RPA tools can interact directly with .NET assemblies using simple point-and-click configuration.

d) Web Services

Applications can expose a web API in the form of REST or SOAP Web services, or plain HTTP GET/POST endpoints. This is more common with cloud-based applications and provides a very robust integration mechanism. Tools like Postman and SoapUI are immensely helpful for evaluating web APIs without writing code.
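A minimal sketch of calling a REST Web service with Python's standard library; the `/orders` endpoint, the payload and the bearer token are hypothetical. Unlike a file drop, the HTTP response gives the caller immediate feedback: `urlopen` raises `urllib.error.HTTPError` for 4xx/5xx status codes.

```python
import json
import urllib.request


def build_order_request(base_url: str, order: dict, token: str) -> urllib.request.Request:
    """Build a JSON POST to a hypothetical /orders endpoint."""
    body = json.dumps(order).encode("utf-8")
    return urllib.request.Request(
        url=f"{base_url.rstrip('/')}/orders",
        data=body,
        method="POST",
        headers={
            "Content-Type": "application/json",
            "Accept": "application/json",
            "Authorization": f"Bearer {token}",
        },
    )


def post_order(base_url: str, order: dict, token: str, timeout: float = 10.0) -> dict:
    """Send the order and return the parsed JSON response."""
    req = build_order_request(base_url, order, token)
    # urlopen raises HTTPError for error status codes, so a rejected call
    # is never silently mistaken for success.
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        return json.loads(resp.read().decode("utf-8"))
```

Keeping request construction separate from sending makes it easy to unit test the payload and headers without a live endpoint.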

e) Off-the-shelf connectors

Off-the-shelf connectors or middleware can simplify certain aspects of integration. Middleware tools handle a number of integration concerns and facilitate communication between systems, maintaining the integrity of information across them. They sometimes provide the quickest route but can be expensive. They can also have limitations and may not fully address the integration use case, so due diligence and prototyping should be carried out before committing to an off-the-shelf connector.

f) Robotic process automation (RPA)

RPA systems can be highly effective in achieving integration in scenarios where an API is not available, or the API is too convoluted for the given use case. RPA tools can talk to both source and target applications from the presentation layer by imitating user actions.

Recently, RPA has been promoted as the default strategy for all integrations. Whilst RPA is useful in certain scenarios, you should decide whether to use it only after carrying out proper analysis, rather than choosing it blindly because it is available or is the latest shiny tool.

2. Authentication & authorisation

How systems authenticate incoming requests should be an important consideration in the integration design. A target application should always authenticate every incoming request from another application. Authentication of system-to-system requests is just as important as authentication of human users.

Typically, service accounts are configured for authentication, and it is common practice for these accounts to have their passwords set never to expire. It can be challenging to mandate a password expiry policy on service accounts, as expired passwords require manual intervention to update them. (Resetting passwords and updating configuration files could be a good use case for RPA.)

OAuth (Open Authorization), an open standard for authorisation, offers an alternative to username-password authentication based on access tokens. It is good practice for tokens to be time-limited, but a reliable mechanism should then be employed to renew them.
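One way to implement reliable renewal is to cache the access token and fetch a new one shortly before it expires. The sketch below assumes a hypothetical `fetch` callable that calls the provider's token endpoint (for example with the OAuth 2.0 client credentials grant) and returns the token together with its lifetime in seconds (`expires_in`). It is not thread-safe as written.

```python
import time
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Token:
    access_token: str
    expires_at: float  # absolute time, in the same units as the clock


class TokenManager:
    """Caches an access token and renews it shortly before it expires."""

    def __init__(
        self,
        fetch: Callable[[], tuple[str, int]],
        margin: float = 60.0,
        clock: Callable[[], float] = time.time,
    ):
        self._fetch = fetch    # hypothetical: returns (access_token, expires_in_seconds)
        self._margin = margin  # renew this many seconds before expiry
        self._clock = clock    # injectable so expiry can be tested
        self._token: Optional[Token] = None

    def get(self) -> str:
        now = self._clock()
        if self._token is None or now >= self._token.expires_at - self._margin:
            access_token, expires_in = self._fetch()
            self._token = Token(access_token, now + expires_in)
        return self._token.access_token
```

Renewing a little early (the `margin`) avoids sending a token that expires while the request is in flight.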

Authentication should not be treated as a substitute for authorisation. Every request should be authorised to ensure that only permitted actions and data access are allowed. For example, if reading from a database, the database account used should only have access to the specific tables required, and only read-only access if that is all that is needed. Likewise, authorisation controls should restrict each caller to only the API functions it needs.
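Authorisation of API functions can be sketched as a deny-by-default lookup, assuming the caller's identity (`client_id`) has already been established by authentication. The client names and operation names below are hypothetical.

```python
# Hypothetical mapping of authenticated clients to the operations they may call.
PERMISSIONS: dict[str, frozenset[str]] = {
    "mailroom-bot": frozenset({"orders.create", "customers.read"}),
    "reporting-svc": frozenset({"orders.read"}),
}


class NotAuthorised(Exception):
    pass


def authorise(client_id: str, operation: str) -> None:
    """Deny by default: unknown clients and unlisted operations are rejected."""
    allowed = PERMISSIONS.get(client_id, frozenset())
    if operation not in allowed:
        raise NotAuthorised(f"{client_id!r} may not call {operation!r}")
```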

It is also good practice to lock down access to specific IP addresses or networks to add another layer of security. The impact of integration endpoints on the security landscape should be reviewed as part of the initial integration design.
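IP restrictions are usually enforced at the firewall or API gateway, but an application-level check can add defence in depth. A minimal sketch using Python's `ipaddress` module, with hypothetical allowed networks:

```python
import ipaddress

# Hypothetical networks permitted to call the integration endpoint.
ALLOWED_NETWORKS = [
    ipaddress.ip_network("10.20.0.0/16"),
    ipaddress.ip_network("192.168.5.10/32"),
]


def is_allowed(remote_addr: str) -> bool:
    """Return True only if the caller's address falls inside an allowed network."""
    try:
        addr = ipaddress.ip_address(remote_addr)
    except ValueError:
        return False  # malformed address: reject
    # Membership tests across IPv4/IPv6 versions simply return False.
    return any(addr in net for net in ALLOWED_NETWORKS)
```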

3. Data

a) Data Exchange

Data exchange is key to all integrations. We should plan carefully what data is exchanged and how the target application will interpret it.

b) Data Mapping

Data fields should be mapped carefully across systems, as the same data can be stored in different systems under different data tags. For example, a supplier identifier may be referred to as ‘VendorID’ in the ERP system but ‘SupplierID’ in the CRM system.
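An explicit mapping table keeps field renames in one reviewable place. In this sketch only the ‘VendorID’ to ‘SupplierID’ pair comes from the example above; ‘VendorName’ is a hypothetical extra field. Unmapped fields raise an error rather than being silently dropped.

```python
# Hypothetical mapping from ERP field names to CRM field names.
ERP_TO_CRM = {
    "VendorID": "SupplierID",
    "VendorName": "SupplierName",
}


def map_record(record: dict, mapping: dict) -> dict:
    """Rename fields using the mapping; fail loudly on fields with no mapping."""
    unmapped = set(record) - set(mapping)
    if unmapped:
        raise KeyError(f"no mapping for fields: {sorted(unmapped)}")
    return {mapping[key]: value for key, value in record.items()}
```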

c) Data Caching

For integrations that synchronise datasets across systems for reference and data validation, consider a data caching mechanism to avoid overloading the target system with repeated queries where access to real-time data is not required. The cache expiry window should be worked out carefully based on how frequently the source data changes. For example, there is always a lag between an insurance policy being set up and a claim form being received, so a delay in synchronising policy data is acceptable. On the other hand, payment data should always be synchronised between the payment gateway and the accounts system in real time.
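A minimal time-to-live (TTL) cache sketch; `loader` stands in for a hypothetical query against the target system, and `ttl_seconds` is the expiry window discussed above. The clock is injectable so the expiry logic can be tested. A cache like this would suit policy look-ups, but not payment data.

```python
import time
from typing import Any, Callable


class TTLCache:
    """Cache lookups to a target system for a fixed expiry window."""

    def __init__(
        self,
        loader: Callable[[str], Any],
        ttl_seconds: float,
        clock: Callable[[], float] = time.monotonic,
    ):
        self._loader = loader  # hypothetical: queries the target system
        self._ttl = ttl_seconds
        self._clock = clock
        self._entries: dict[str, tuple[float, Any]] = {}  # key -> (expires_at, value)

    def get(self, key: str) -> Any:
        now = self._clock()
        entry = self._entries.get(key)
        if entry is not None and now < entry[0]:
            return entry[1]  # still fresh: no call to the target system
        value = self._loader(key)
        self._entries[key] = (now + self._ttl, value)
        return value
```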

4. Transaction Atomicity & closing the loop

When integrating with multiple systems in parallel, keep the atomicity of the transaction in mind. Under no circumstances should data integrity be compromised, and no system should ever be left in an inconsistent state.

For example, when posting invoice data to an ERP system and uploading the invoice image to a document management system, the integration layer should ensure that both actions succeed, because the process is only successful when both are complete. If one fails, the other should be undone so that neither system is left holding a partial record.
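Because two separate vendor systems rarely share a distributed transaction, one common technique is a compensating action: if the second step fails, the first is undone. This sketch assumes hypothetical `erp` and `dms` client objects with `post_invoice`, `reverse_invoice` and `upload_document` methods; real ERP and DMS APIs will differ.

```python
class IntegrationError(Exception):
    pass


def post_invoice_with_image(erp, dms, invoice: dict, image: bytes) -> str:
    """Post invoice data to the ERP and its image to the DMS as one unit of work.

    `erp` and `dms` are hypothetical clients; the method names are illustrative.
    """
    erp_ref = erp.post_invoice(invoice)
    try:
        dms.upload_document(erp_ref, image)
    except Exception as exc:
        # Compensating action: undo the ERP posting so neither system holds a partial record.
        try:
            erp.reverse_invoice(erp_ref)
        except Exception as comp_exc:
            # Both steps failed: escalate for manual intervention.
            raise IntegrationError(
                f"upload failed and reversal of {erp_ref} also failed; manual action needed"
            ) from comp_exc
        raise IntegrationError(f"upload failed; ERP posting {erp_ref} reversed") from exc
    return erp_ref
```

The failure of the compensating action itself is reported separately, since that is the one case where a human has to step in.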

5. Integration testing

Proper attention should be given to integration testing. This normally involves preparing detailed test cases with associated test data. It is good practice to develop a test harness (test driver) to expedite testing, and to use test automation where possible for regression testing.
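A table-driven test driver is one lightweight way to build such a harness. In this sketch, `validate_order` is a hypothetical validation step from the Digital Mailroom example and the test cases are illustrative; adding a new regression case is a one-line change to `CASES`.

```python
import unittest


def validate_order(order: dict) -> list[str]:
    """Hypothetical validation step under test: returns a list of issues."""
    issues = []
    if not order.get("customer_account"):
        issues.append("missing customer account")
    if order.get("quantity", 0) <= 0:
        issues.append("quantity must be positive")
    return issues


# Each case: (description, input order, expected issues)
CASES = [
    ("valid order", {"customer_account": "C1", "quantity": 2}, []),
    ("no account", {"quantity": 1}, ["missing customer account"]),
    ("zero quantity", {"customer_account": "C1", "quantity": 0}, ["quantity must be positive"]),
]


class OrderValidationTests(unittest.TestCase):
    def test_cases(self):
        # subTest reports each failing case individually instead of stopping at the first.
        for description, order, expected in CASES:
            with self.subTest(description):
                self.assertEqual(validate_order(order), expected)
```

The suite can be run with `python -m unittest` pointed at the module, for example as part of a CI pipeline for regression testing.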

6. User Experience

User experience is an important aspect of successful system integration and should be one of its key non-functional requirements. For example, the availability and seamless flow of data across systems can improve the user experience by reducing Alt+Tab switching between applications.

7. Auditability

A sufficient audit trail and logs should be generated. These logs should be monitored continually for errors and are also essential for fault finding and root cause analysis when an issue occurs. Logs should be verbose enough to trace the state of each transaction at every step of the integration, allowing fast analysis and remedial action.
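One way to make logs traceable is to tag every entry with a transaction ID and write it as structured JSON, so all the steps for one document or order can be filtered together. A minimal sketch using Python's `logging` module; the logger name, step names and fields are hypothetical.

```python
import json
import logging
import uuid

logger = logging.getLogger("integration.audit")  # hypothetical logger name


def new_transaction_id() -> str:
    """Generate an ID that follows one transaction through every integration step."""
    return uuid.uuid4().hex


def log_step(transaction_id: str, step: str, status: str, **details) -> None:
    """Emit one JSON audit line per integration step."""
    logger.info(json.dumps(
        {"transaction_id": transaction_id, "step": step, "status": status, **details},
        sort_keys=True,
    ))


if __name__ == "__main__":
    logging.basicConfig(level=logging.INFO, format="%(asctime)s %(levelname)s %(message)s")
    tx = new_transaction_id()
    log_step(tx, "extract", "ok", document="order-123.pdf")
    log_step(tx, "erp.post_invoice", "failed", error="HTTP 503")
```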

At Telic Digital, our consultants have experience of integrating a wide variety of applications using multiple approaches across hundreds of intelligent process automation projects. Should you want some assistance, we can be contacted here.